Carol, a 28-year-old freelance dance teacher and spiritual seeker living in a one-room apartment in east London, uses Facebook extensively to advertise her business and get technical help for her dance shows. She also takes part in online activities related to personality development, such as quizzes advertised through Facebook. In mid-March 2018, The Guardian, along with The New York Times and The Observer, reported that Cambridge Analytica, a data analytics firm, had misused the data of 50 million Facebook users. Carol felt that she had been let down by her data fiduciaries.

At the heart of the Facebook-Cambridge Analytica scandal lie the shady data collection practices of a psychology researcher. The researcher wanted to study the personality traits of social media users, and subsequently a personality quiz was designed based on the presumably anonymized data disclosed by Facebook. More than 200,000 people participated in his study and their data was collected. However, improper analysis of the risks and benefits of the research has jeopardized the privacy of many individuals.

The example demonstrates the need to question the privacy guarantees that researchers promise their study participants.

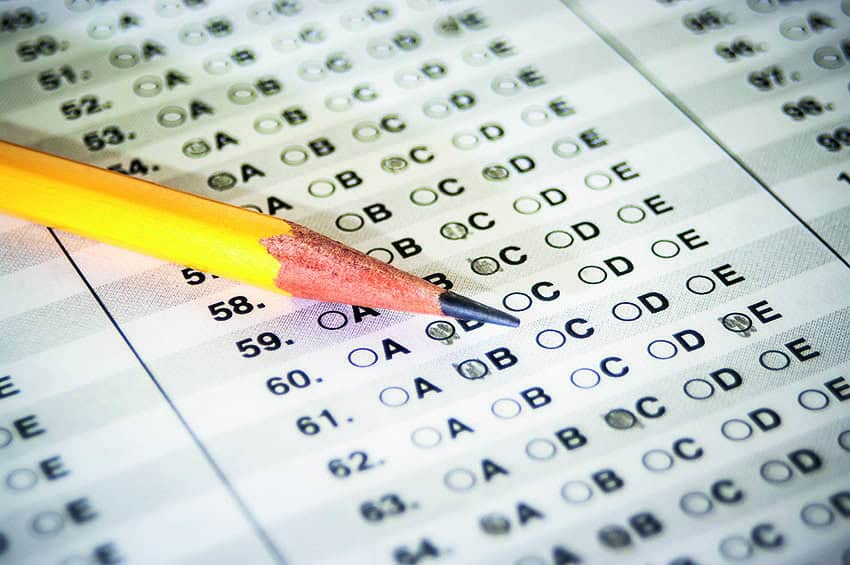

Typically, ethical concerns around data collection, data dissemination and respecting participant rights are addressed directly through informed consent, and indirectly through data anonymization measures and institutional review board approval.

Informed consent is a formal procedure designed to make sure that the participants have voluntarily given their permission to take part in the study in question.

Before obtaining informed consent, the researchers usually present study participants with information about the implications of their action in relation to the research study. However, there exists a degree of ambiguity in terms of what constitutes informed consent.

Legally speaking, a participant who gives informed consent gives the researchers permission to study them.

Nevertheless, for the most part, the objectiveness of the “informed” part of the consent is inferred. One of the key factors in getting the informed part of consent right is getting the risk and benefit analysis of the research right. However, in the era of social media and data mining, the risks and benefits of a research project cannot be assessed in isolation.

The risk-screening tools available to researchers are not sophisticated enough to assess the risks of data aggregation and data inference, which arise when publicly released data from multiple sources is combined.

The most common data anonymization measures that researchers use are removing explicit identifiers from the research data and using tokens instead of real identifiers. However, Professor Latanya Sweeney at Harvard University, Arvind Narayanan, a computer scientist at Princeton University, and others have shown these naïve anonymization techniques to be ineffective.

Sweeney showed in her research that almost 87% of the US population could be uniquely identified from just their date of birth, gender, and ZIP code. The demographics section of most research papers contains closely related attributes, such as age, gender, and location.

In isolation, these are non-sensitive attributes but when combined with publicly available data, the identity of those participating in the research study could be exposed.
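To make the linkage risk concrete, here is a minimal sketch in Python. All of the data is invented for illustration: the `study` records, the `public` register, and the choice of age, gender and ZIP code as quasi-identifiers are assumptions, not data from any real study. The sketch joins a "de-identified" study table with a public table and reports every participant whose attribute combination matches exactly one named person.

```python
# Toy "de-identified" study records: names removed, quasi-identifiers kept.
# All values are invented for illustration.
study = [
    {"age": 28, "gender": "F", "zip": "43004", "diagnosis": "asthma"},
    {"age": 28, "gender": "F", "zip": "43004", "diagnosis": "diabetes"},
    {"age": 35, "gender": "M", "zip": "43016", "diagnosis": "HIV"},
    {"age": 41, "gender": "F", "zip": "43017", "diagnosis": "flu"},
]

# Toy public register (hypothetical), e.g. a membership list with names.
public = [
    {"name": "Alice", "age": 28, "gender": "F", "zip": "43004"},
    {"name": "Beth", "age": 28, "gender": "F", "zip": "43004"},
    {"name": "Chris", "age": 35, "gender": "M", "zip": "43016"},
    {"name": "Dana", "age": 41, "gender": "F", "zip": "43017"},
    {"name": "Erin", "age": 41, "gender": "F", "zip": "43017"},
]

QUASI_IDENTIFIERS = ("age", "gender", "zip")


def quasi_key(record):
    """Return the tuple of quasi-identifier values for one record."""
    return tuple(record[q] for q in QUASI_IDENTIFIERS)


def link(study_records, public_records):
    """Map each uniquely matched name to the sensitive value it exposes."""
    names_by_key = {}
    for person in public_records:
        names_by_key.setdefault(quasi_key(person), []).append(person["name"])

    exposed = {}
    for record in study_records:
        names = names_by_key.get(quasi_key(record), [])
        if len(names) == 1:  # exactly one candidate: re-identified
            exposed[names[0]] = record["diagnosis"]
    return exposed


print(link(study, public))  # {'Chris': 'HIV'}
```

Only the participant whose combination of attributes is unique in the public table is exposed; the others are protected merely because someone else happens to share their age, gender and ZIP code.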

And this is not even the worst-case scenario for researchers publishing their research data. John S. Brownstein and collaborators showed that study participants can be re-identified from the statistical plots used in health research articles.

The underlying construct of de-identification is based on the identity of a person, but what constitutes identity is blurred in the age of information overload.

If, for example, a sample of dancers from the state of Ohio in an HIV study contains just one male artist, then his gender becomes his identity. Hence, this model of de-identification is much like an arms race between the data publishers and the greedy data miners who try to re-identify individuals from publicly available data. It is challenging to know what identifies a person in a dataset, and to gauge what background knowledge an adversary possesses.
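One rough way to check for this situation before publishing is to count how many records share each combination of attribute values; a group of size one means that combination singles somebody out. This idea is often discussed under the name k-anonymity, which is not a term the argument above depends on. The sketch below is illustrative only: the `sample` data and the helper name `smallest_group` are assumptions made for this example.

```python
from collections import Counter


def smallest_group(records, attributes):
    """Size of the smallest group of records sharing the same attribute values."""
    groups = Counter(tuple(r[a] for a in attributes) for r in records)
    return min(groups.values())


# Invented sample: four dancers from Ohio, only one of them male.
sample = [
    {"gender": "F", "state": "OH"},
    {"gender": "F", "state": "OH"},
    {"gender": "F", "state": "OH"},
    {"gender": "M", "state": "OH"},
]

print(smallest_group(sample, ["state"]))   # 4: state alone singles nobody out
print(smallest_group(sample, ["gender"]))  # 1: gender alone identifies one person
```

A check like this only covers the attributes the publisher thinks to test; it says nothing about what an adversary might already know.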

Corresponding with the Institutional Review Board (IRB) is another way for a researcher to show that the research project preserves the privacy of the study participants.

The IRB appraises a research project by analyzing its risks and benefits for the human subjects involved. Again, the risk and benefit analyses carried out by ethics review boards have not kept up with technological development, and are thus unable to correctly assess the risks of data aggregation. Further, the ethics review board relies on the information provided by the researchers to conduct its analysis. Hence, if the researchers haven’t gotten the risks and risk reduction measures right, then the correspondence with the IRB won’t add much.

One promising model for releasing aggregate statistics about an underlying population sample is a privacy standard called Differential Privacy. The underlying idea behind this standard is to minimize the risk of private information disclosure due to participation in a research study.

One way of guarding against private information leaks using differential privacy is to add random noise, which masks the evidence of any one person’s influence on the statistical result. This means that the statistical results of a study remain almost the same whether or not any one individual takes part in it. Because a participant’s private information cannot be distinguished in the study result, this approach is more robust against re-identification risks than the naïve anonymization measures described above.
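The noise-adding idea can be sketched for the simplest case, a counting query. The example below assumes the Laplace mechanism, one common way of achieving differential privacy, with noise scaled to `1 / epsilon` because adding or removing one participant changes a count by at most one. The function names, the toy `participants` data and the value of `epsilon` are all assumptions chosen for illustration, not recommendations.

```python
import random


def laplace_noise(scale, rng):
    """Draw Laplace(0, scale) noise as the difference of two exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon, rng):
    """Noisy count of the records that satisfy the predicate.

    A count changes by at most 1 when one person joins or leaves the
    study, so the noise scale is 1 / epsilon (assumed sensitivity of 1).
    """
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Invented data: 100 participants, 30 of whom answered "yes".
participants = [{"answer": "yes"}] * 30 + [{"answer": "no"}] * 70

rng = random.Random(2018)  # seeded only so the sketch is repeatable
noisy = private_count(participants, lambda r: r["answer"] == "yes",
                      epsilon=0.5, rng=rng)
print(round(noisy, 1))  # close to 30, but not exactly 30
```

A smaller `epsilon` means more noise and stronger privacy; a larger one means a more accurate but more revealing answer. Repeating the same query many times erodes the guarantee, which this sketch does not account for.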

Social media applications and technological advances such as data mining and machine learning have blurred the distinction people draw between their digital and physical lives. However, privacy practices in research studies do not seem to be keeping pace with these advances. This will have adverse effects, such as study participants losing their trust in research and their motivation to take part in it.

Hence, the research community needs to shift the way it thinks about preserving the privacy of those participating in research studies. The research community has already produced a plethora of results that enable data analysis and data publishing without violating the privacy of individuals. The time is now right for the research community to embrace those results and tools when dealing with research projects that involve human subjects.

Jenni Reuben

PhD Candidate in Computer Science, Karlstad University

Further reading: Latanya Sweeney, “Weaving Technology and Policy Together to Maintain Confidentiality”, Journal of Law, Medicine & Ethics.